Spring is in bloom, bringing new and exciting developments in the Python world. The last preview release of Python 3.12 before the feature freeze, a new major version of pandas, pip and PyPI improvements, and PyCon US 2023 are a few of them.

Grab a cup of your favorite beverage, sit back comfortably in your chair, and enjoy a fresh dose of Python news from the past month!

Join Now: Click here to join the Real Python Newsletter and you'll never miss another Python tutorial, course update, or post.

Python 3.12.0 Alpha 7 Is Now Available

Python 3.12.0 alpha 7 became available to the public on April 4, marking the final alpha version before the planned transition to the beta phase, which will begin a partial feature freeze. Beyond this point, most development efforts will focus on fixing bugs and making small improvements without introducing significant changes in the codebase. But existing features could be changed or dropped until the release candidate phase.

While we’re still a few months away from the final release in October, we already have a pretty good idea about the most notable features that should make it into Python 3.12:

- Ever better error messages

- Support for the Linux perf profiler

- A step toward multithreaded parallelism

- An @override decorator for static typing

- More precise **kwargs typing

- New syntax for specifying generic types

- Parentheses in assert statements

- Various performance and memory optimizations

- Numerous deprecations and removals

But you don’t have to wait until the fall to get your hands on these upcoming features. You can check them out today by installing a pre-release version of Python, remembering that alpha and beta releases are solely meant for testing and experimenting. So, never use them in production!
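As a taste of one of these features, here's a minimal sketch of the @override decorator. The class and method names are made up for illustration, and the fallback branch is only an assumption that lets the snippet run on interpreters older than 3.12, where typing.override doesn't exist yet:

```python
try:
    # Part of the standard library starting with Python 3.12
    from typing import override
except ImportError:
    # Hypothetical no-op stand-in so the sketch also runs on older versions
    def override(method):
        return method


class Greeter:
    def greet(self) -> str:
        return "Hello"


class PoliteGreeter(Greeter):
    @override  # Tells static type checkers that this must override a parent method
    def greet(self) -> str:
        return "Good day"


greeting = PoliteGreeter().greet()
print(greeting)
```

The decorator is aimed at static type checkers rather than at runtime behavior. If you misspelled the method name in the subclass, a type checker that supports the decorator could report that nothing is being overridden.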

If you happen to find something that isn’t working as expected, then don’t hesitate to submit a bug report through Python’s issue tracker on GitHub. Testing pre-release versions of Python is one of the reasons why they’re available to early adopters in the first place. The whole Python community will surely appreciate your help in making the language as stable and reliable as possible.

Note: For a full list of features implemented in the Python 3.12.0 alpha 7 release, have a glimpse at its changelog, which includes links to the respective GitHub tickets.

The Python 3.12.0 alpha 7 release brings us one step closer to the final version, but there’s still a lot of work to be done. These ongoing efforts may sometimes affect the official release schedule, so keep an eye on it and stay tuned for more updates in the coming months.

pandas 2.0 Receives a Major Update With PyArrow Integration

The popular Python data analysis and manipulation library pandas has recently released its latest version, pandas 2.0.0, followed by a patch release shortly after. These updates finalize a release candidate that became available a few months ago.

Historically, pandas has relied on NumPy as its back end for storing DataFrame and Series containers in memory. This release introduces an exciting new development in the form of optional PyArrow engine support, providing the Apache Arrow columnar data representation. However, nothing is changing by default, as the developers behind pandas aim to accommodate their large user base and avoid introducing breaking changes.

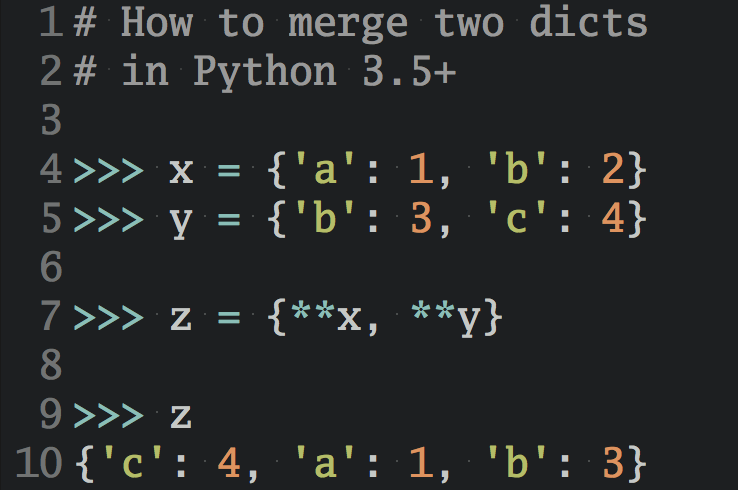

You now have the option to request the PyArrow back end instead of NumPy, as you can see in the following code snippets:

>>> import pandas as pd
>>> pd.Series([1, 2, None, 4], dtype="int64[pyarrow]")
0       1
1       2
2    <NA>
3       4
dtype: int64[pyarrow]

>>> df = pd.read_csv("file.csv", engine="pyarrow", dtype_backend="pyarrow")
>>> df.info()
<class 'pandas.core.frame.DataFrame'>
RangeIndex: 21 entries, 0 to 20
Data columns (total 9 columns):
 #   Column          Non-Null Count  Dtype
---  ------          --------------  -----
 0   date            21 non-null     date32[day][pyarrow]
 1   transaction_no  21 non-null     int64[pyarrow]
 2   payment_method  21 non-null     string[pyarrow]
 3   category        21 non-null     string[pyarrow]
 4   item            21 non-null     string[pyarrow]
 5   qty             21 non-null     double[pyarrow]
 6   price           21 non-null     string[pyarrow]
 7   subtotal        21 non-null     string[pyarrow]
 8   comment         21 non-null     string[pyarrow]
dtypes: date32[day][pyarrow](1), double[pyarrow](1), int64[pyarrow](1), string[pyarrow](6)
memory usage: 1.8 KB

Note that you’ll need to have PyArrow installed alongside pandas, usually in the same virtual environment, to use it as the back end for representing data. While NumPy is well suited for numerical computing, PyArrow handles missing values, strings, and other data types better.

The decision to integrate the Apache Arrow in-memory data format into pandas provides four main benefits:

- Improved handling of missing values: PyArrow can retain the integer data type while handling missing values. NumPy would instead convert the integers to floats so that it can use the IEEE 754 NaN value, which is handled at the CPU level, to signal missing values.

- Faster performance: PyArrow significantly speeds up the loading time of CSV files and optimizes string operations, among many other improvements.

- Greater interoperability: Apache Arrow serves as a back end for other data manipulation tools, such as R, Spark, and Polars, enabling efficient data sharing between them with little memory overhead.

- Enhanced data types: Arrow’s data types are more advanced and efficient than NumPy’s, providing better support for string manipulation, date and time handling, and Boolean storage, for example.
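The first benefit in the list above is easy to check for yourself. The following is a minimal sketch that assumes pandas 2.0 is installed. The variable names are illustrative, and the PyArrow part is skipped when the library can't be imported:

```python
import pandas as pd

values = [1, 2, None, 4]

# Default NumPy back end: the missing value forces a conversion to floats
numpy_backed = pd.Series(values)
print(numpy_backed.dtype)  # float64

try:
    import pyarrow  # noqa: F401  (only checking that it's installed)
except ImportError:
    arrow_backed = None
    print("PyArrow isn't installed, skipping the comparison")
else:
    # PyArrow back end: the column stays an integer column with one <NA>
    arrow_backed = pd.Series(values, dtype="int64[pyarrow]")
    print(arrow_backed.dtype)  # int64[pyarrow]
```

Keeping the integer type matters when the values are identifiers or counts, which would otherwise show up as 1.0 and 2.0 after loading.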

To learn more about pandas 2.0 and its features, read the official release notes, which include a detailed commit log. You can also check out the blog post from one of the pandas core developers, watch a YouTube video discussing the topic, or listen to The Real Python Podcast Episode 167: Exploring pandas 2.0 & Targets for Apache Arrow. With the integration of PyArrow in pandas 2.0, users can expect a more powerful and performant data manipulation experience in their projects.

pip 23.1 Gets an Improved Dependency Resolver

Up until recently, pip would remain indifferent about conflicting dependency version constraints in Python projects. This could lead to broken installations from arbitrarily installing one of many incompatible versions of the same transitive dependency required by other dependencies. The user wouldn’t know about the problem until they experienced a runtime error.

Sometimes, dependency conflicts could occur even when nothing in the project itself had changed. That was often the case with so-called unpinned dependencies, which didn’t specify their versions. In such a case, the pip command would install the newest release, which might conflict with other dependencies in the project.

Trying to resolve such an inconsistent combination of packages by hand often proved difficult, which led to the coining of the term dependency hell.

Note: In the meantime, the Python community developed a few third-party dependency managers, such as Pipenv and Poetry, as pip alternatives that could deal with more complex dependency graphs.

The situation improved with the introduction of a proper dependency resolver in pip version 20.3 back in 2020. As a result, the tool became more strict and consistent, refusing to install libraries that conflicted and finding correct solutions if they existed. But in rare cases, the new dependency resolver could get stuck for a really long time, noticeably increasing the installation time.

In April 2023, the pip team released version 23.1 of the Python package installer to address this problem and bring even more improvements to their dependency resolver. In particular, they made the backtracking algorithm much faster, which helped solve many outstanding issues in the tool’s issue tracker.
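To get a feel for what backtracking means in this context, consider the toy resolver below. It's only a sketch of the general technique and not pip's actual implementation. The package names, the integer version numbers, and the INDEX structure are all hypothetical:

```python
# Hypothetical package index: name -> version -> {dependency: allowed versions}
INDEX = {
    "web": {2: {"http": {2}}, 1: {"http": {1, 2}}},
    "auth": {1: {"http": {1}}},
    "http": {2: {}, 1: {}},
}


def resolve(pending, pinned):
    """Return a dict of pinned versions that satisfies every constraint, or None."""
    if not pending:
        return pinned
    (name, allowed), rest = pending[0], pending[1:]
    if name in pinned:
        # The package was already chosen, so the new constraint must agree with it
        return resolve(rest, pinned) if pinned[name] in allowed else None
    for version in sorted(INDEX[name], reverse=True):  # Prefer the newest release
        if version not in allowed:
            continue
        dependencies = list(INDEX[name][version].items())
        result = resolve(rest + dependencies, {**pinned, name: version})
        if result is not None:
            return result
    return None  # Every candidate failed, so the caller has to backtrack


solution = resolve([("web", {1, 2}), ("auth", {1})], {})
print(solution)
```

Here, the resolver first tries the newest web release, discovers that its http requirement clashes with the one from auth, and then backtracks to the older web release. With many packages and versions, the number of such dead ends can grow quickly, which is why a faster backtracking strategy makes a noticeable difference.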

If you’re not yet on the latest version of pip, then you can upgrade it by issuing the following command in the terminal:

$ python -m pip install --upgrade pip

This is a welcome improvement to the Python package management ecosystem, and it should make managing complex Python dependencies much easier.

PyCon US 2023 Celebrates Its Twentieth Anniversary

The biggest annual conference devoted to the Python programming language in the world, PyCon US, celebrated its twentieth anniversary this year. The conference has grown significantly since it began in 2003 and has become one of the largest gatherings of the worldwide Python community.

This year’s conference was held both online and in person, with health and safety guidelines in place due to the ongoing COVID-19 pandemic at the time. Like last year, the on-site event took place in Salt Lake City, Utah, returning to the same venue. The conference started a bit earlier this year, lasting from April 19 to April 27.

For the second year in a row, Real Python had a booth at PyCon, located at the back of the expo hall, where anyone could stop by to collect swag, shake hands, or have a chat with some of our team.

It was fantastic to meet our readers, subscribers, podcast listeners, and Office Hours attendees. Thank you so much for coming up to us at the Real Python booth and saying hi. We appreciate your questions and suggestions, as well as your sharing what you like about the site!

PyCon US 2023 kicked off with a fun throwback video showcasing people’s pictures and personal stories from the previous conferences they attended. Attending PyCon US seems to be addictive because many familiar faces made an appearance in the video. Martin, one of our colleagues, can attest to that, as his adorable one-year-old has already gone twice!

There were almost ninety talks to choose from, covering a wide range of topics from web development to data science. The talks were divided into five tracks, including a Spanish-language series, all taking place at the same time in different rooms. You can check out the full talk schedule to get a sense of what the conference had to offer.

Don’t feel bad if you didn’t make it to PyCon US this year. Even the attendees couldn’t possibly follow all the talks and events happening at the conference! Keep an eye on the official PyCon US YouTube channel, which usually releases the conference video recordings in batches within a month or two after the event. In the meantime, you can take a sneak peek at the best talks from PyCon US 2023 for data scientists.

Even though this year’s PyCon US has ended, preparations for the next two editions are already in the works. Both PyCon US 2024 and PyCon US 2025 will take place in Pittsburgh, Pennsylvania, which is closer to the East Coast, making it more accessible to European Pythonistas. Regardless of where you’re from, we hope to see you at a PyCon US in the future!

PyPI Introduces Trusted Publishers and Organization Accounts

On its recently launched blog, the Python Package Index (PyPI) announced two new features that are now available in the official repository of Python packages: trusted publishers and organization accounts.

The first feature makes publishing Python packages to PyPI more secure. Maintainers are now encouraged to take advantage of the OpenID Connect (OIDC) standard for authenticating their identity. This new authentication method can be especially helpful in automated continuous integration environments that would’ve previously required disclosing a username and password or an API token.

Sharing a long-lived secret like this with a third-party system always poses a risk due to possible leakage. Therefore, in addition to the traditional authentication methods, it’s now also possible to configure a specific project on PyPI to trust a given third party, who’ll act as an identity provider. As of now, the only trusted publisher that PyPI supports is GitHub, but that will likely change in the future.

The benefits of trusted publishing on PyPI are many:

This allows PyPI to verify and delegate trust to that identity, which is then authorized to request short-lived, tightly-scoped API tokens from PyPI. These API tokens never need to be stored or shared, rotate automatically by expiring quickly, and provide a verifiable link between a published package and its source. (Source)

(…)

Configuring and using a trusted publisher provides a ‘strong link’ between a project and its source repository, which can allow PyPI to verify related metadata, like the URL of a source repository for a project. Additionally, publishing with a trusted publisher allows PyPI to correlate more information about where a given file was published from in a verifiable way. (Source)

Apart from these, using trusted publishers can be more convenient than manually creating and setting up an API token for each automation.

The recommended way of getting started with trusted publishing on PyPI is with the pypi-publish GitHub Action maintained by Python Package Authority (PyPA). The official PyPI documentation provides more details on publishing to PyPI with a trusted publisher.

The second feature that recently rolled out at PyPI is support for organization accounts, which users have been requesting for a long time. From a maintainer’s perspective, the main benefits of having these accounts are the ability to manage roles and permissions across an organization’s different projects and the ability to assemble users into teams for easier collaboration.

For PyPI, this new feature is expected to bring an additional income stream to help finance its operations amid the ever-growing number of users and contributors. Corporate teams must pay a small fee to adopt the organization accounts. On the other hand, community projects owned by hobbyists or non-profit organizations can start using them for free if they want.

Note: There won’t be any differences in terms of features or security between the paid and free subscriptions.

At the time of writing this article, organization accounts were available in closed beta, meaning you needed approval to use them. The PyPI documentation contains more information on organization accounts. Most importantly, there’s no obligation to use them at all.

Both of these new features will help ensure that PyPI is more secure, provides a better experience for the user, and can sustain itself in the long run.

The PSF Voices Concerns Over the EU’s Proposed Policies

The European Union’s planned Cyber Resilience Act and Product Liability Act have drawn criticism from the Python Software Foundation (PSF). The PSF agrees that it’s important to increase security and responsibility for European software users, but it thinks that overly expansive regulations risk unintentionally doing harm.

The new laws may potentially make open-source developers, including those who have never received payment for delivering software, accountable for how their components are used in someone else’s commercial product.

According to the PSF, increased liability should fall on the organization that has a contract with the consumer. It also highlights the crucial role that open-source software repositories like the Python Package Index (PyPI) play in contemporary software development.

With that, the PSF suggests that policymakers should follow the money instead of the code when assigning consumer liability and responsibility. It calls for more clarity in the proposed policies and the exemption of public software repositories that are offered as a public good to facilitate collaboration.

Finally, the PSF notes that the European Union should carefully consider the impact of such policies on complex and vital ecosystems like Python before drafting landmark policies affecting open-source software development.

What’s Next for Python?

So, what’s your favorite piece of Python news from April? Did we miss anything notable? Have you taken Python 3.12 for a test drive already? Did you attend PyCon US this year? Do you share the PSF’s concerns over the proposed EU laws?

Let us know in the comments, and happy Pythoning!
